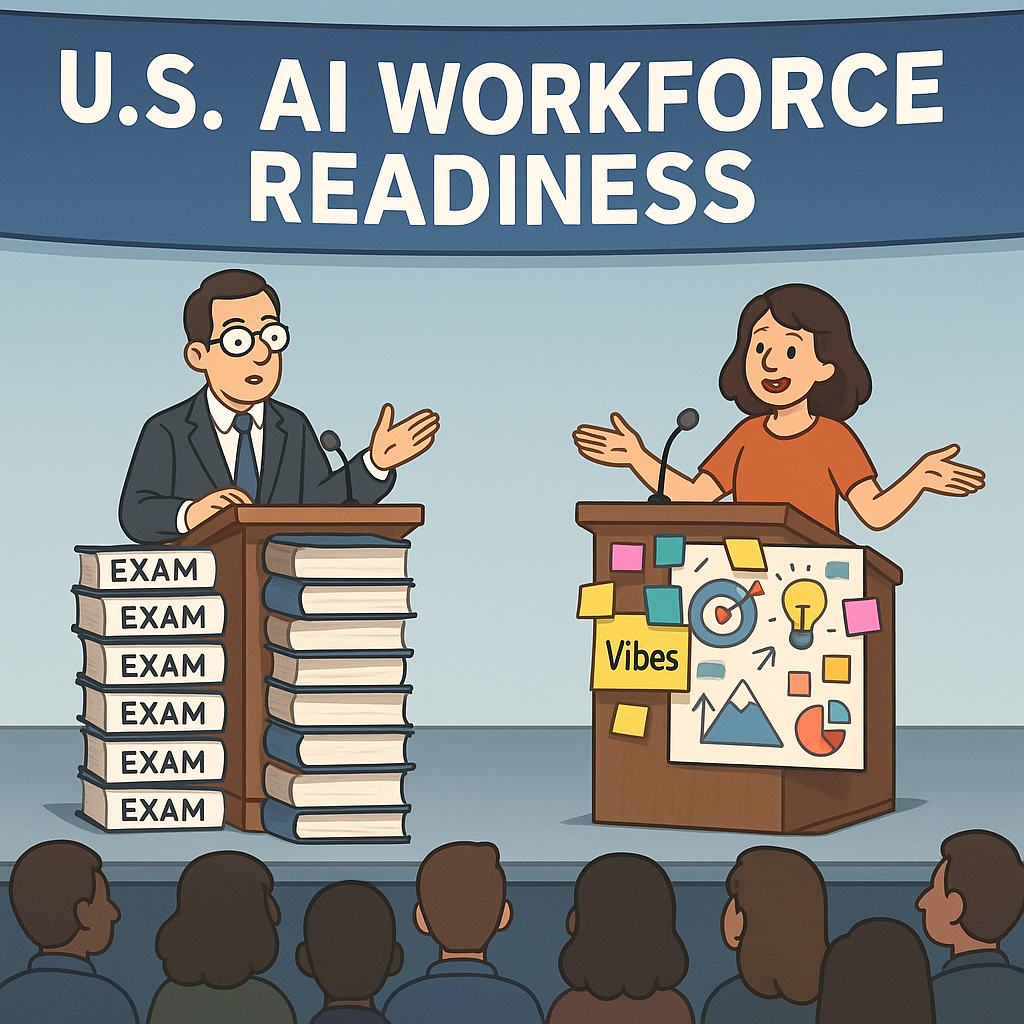

In a devastating blow to everyone whose job title contains the words “AI” and “vibes” but no actual technical skills, EC-Council has announced it is expanding its AI certification portfolio to “strengthen U.S. AI workforce readiness and security” (GlobeNewswire_fr, Feb 2026). In other words: the era of winging it on LinkedIn is under coordinated attack.

The cybersecurity training giant EC-Council, best known for creating Certified Ethical Hackers and ruining weekends for thousands of people cramming for multiple-choice exams, is now coming for the booming cottage industry of people who put “Prompt Engineer” in their bio after watching a 9-minute YouTube video. The new AI certification programs promise to teach real skills around AI governance, security, and implementation, instead of the current market standard: “Be confident, say ‘LLM’ a lot, and hope nobody asks follow-ups.”

“We saw a gap between actual AI security and whatever that dude in your coworking space is doing with a Notion template,” a fictional but emotionally accurate EC-Council spokesperson explained. “So we decided to build structured pathways for the U.S. AI workforce. Also, we were running out of acronyms, and AI gives us like 40 new ones a week.”

According to EC-Council, the expanded AI certification portfolio will cover areas like AI governance, secure deployment, ethical use, and defending organizations against models that have been ‘helpfully’ fine-tuned by interns. The goal is to create an AI workforce that can handle both cyber threats and, more importantly, the 47 contradictory AI policies legal just emailed as a single PDF titled Final_V12_REAL_Final.pdf.

Corporate America, naturally, is thrilled. A training manager at a Fortune 500 company, speaking on condition of anonymity to avoid being invited to more meetings, said: “Right now, our ‘AI readiness’ strategy is just buying more licenses and hoping ChatGPT writes our SOC 2 report. If EC-Council can give me three people who actually know what they’re doing, I will personally knit them commemorative hoodies.”

The U.S. AI workforce, however, is deeply bifurcated. On one side, there are engineers and security professionals who hear ‘AI security’ and think about model poisoning, data exfiltration, and adversarial attacks against neural networks. On the other, there are people named Kyle who hear ‘AI security’ and assume it’s about making sure Midjourney doesn’t render their ex’s new boyfriend hotter than they are.

“I don’t really see why we need more certifications,” said a self-described ‘AI Business Alchemist’ in Austin, who offers $997 mastermind retreats on how to build a ‘7-figure AI agency’ without ever learning what an API is. “My clients don’t want security. They want scalable alignment with their higher self and recurring revenue. Also, my Stripe account got hacked last week, can you blur that part?”

EC-Council’s move lands in a U.S. tech ecosystem that has been treating AI like a combination of crypto, essential oils, and religion. Companies want the productivity boost, regulators want plausible deniability, and everyone else wants to know if they can still charge $400 an hour to ‘translate AI to the C-suite’ using nothing but Canva slides.

To address this, EC-Council is reportedly designing its AI certification exams to filter out what one internal memo allegedly calls “PowerPoint activists.” Rumored exam features include:

- Automatic disqualification if you answer, “It depends on your vibe,” more than once.

- A mandatory question where you must explain AI governance without using the words “journey,” “north star,” or “paradigm.”

- A live practical lab where you secure a model from prompt injection while a fictional VP of Marketing screams, “Can’t we just ship it?” in the background.

Security professionals are cautiously optimistic. “Look, if EC-Council can get executives to understand that ‘AI security’ is more than enabling SSO and calling it a day, I’ll send them a fruit basket,” said a weary CISO at a large financial institution. “Right now, half the board thinks ‘model risk’ is whether the stock photos in our slides are diverse enough.”

Critically, EC-Council is trying to tie AI security into a broader conversation about U.S. AI workforce readiness – the idea that maybe, just maybe, handing powerful generative models to people who still use ‘password123’ is not a strategic masterstroke. The expanded certification portfolio aims to create professionals who can both secure AI systems and explain to their CEO why feeding proprietary data into a random chatbot labeled ‘FREE, NO ACCOUNT NEEDED’ is a cry for help.

“We’re going from a world where people brag, ‘I got ChatGPT to write my whole policy’ to one where they might actually understand the risks of that sentence,” another EC-Council representative allegedly said. “We love that for them.”

Predictably, not everyone is on board. A shadowy coalition of LinkedIn thought leaders has begun quietly panicking at the idea that companies might start asking for verifiable evidence of AI skills. A leak from one invite-only Slack reveals channel names like #pivot_from_ai, #is_breathwork_recession_proof, and #can_we_rebrand_as_web4.

“EC-Council is gatekeeping the AI revolution,” complained one popular ‘AI for Abundance’ influencer in a 37-minute Reels rant. “We should democratize access to AI knowledge by letting anyone call themselves an expert after they successfully prompt, ‘Write a polite email to my landlord.’ Certifications are a tool of the patriarchy.” She then paused to promote her $2,222 “AI Ascension” program, payable in four interest-free installments.

Meanwhile, actual employers seem relieved at the prospect of a clearer signal amidst the noise. Hiring managers report being inundated with resumes listing skills like “ChatGPT (Advanced)” and “Midjourney (Manifestor Level).” One tech recruiter confessed, “If I see one more bullet point that says ‘Used AI to 10x productivity’ with no details, I’m going to 10x my resignation letter.”

The escalation is already visible: companies are whispering about making EC-Council’s AI certifications preferred for roles like AI Security Engineer, AI Risk Manager, and That One Person Who Actually Reads the Data Processing Agreements. The U.S. AI workforce, sensing the shift, is split into three camps:

- People rushing to get certified.

- People writing LinkedIn posts about how “real innovators don’t need credentials.”

- People quietly googling “What is EC-Council” in an incognito tab.

In wellness circles, the vibe is more existential. As AI becomes formalized, secured, and relentlessly certified, there’s growing concern that the era of free-range nonsense is ending. “If we keep this up,” sighed one life coach turned AI consultant, “soon I’ll actually have to know how transformers work instead of just saying we’re ‘transforming.’ That feels… misaligned with my nervous system.”

Still, EC-Council appears undeterred. Their message is clear: the U.S. can’t afford an AI workforce built solely on vibes, vision boards, and whatever that guy at WeWork mumbled about ‘fine-tuning your brand frequency.’ Someone needs to understand what’s happening under the hood, or at least how to prevent the hood from emailing your customer data to a phishing server in another time zone.

So as EC-Council expands its AI certification portfolio and doubles down on workforce readiness and security, a new era of AI professionalism may be dawning. In response, America’s wellness-infused fake experts are launching their boldest countermeasure yet: a four-week cohort-based course titled, “How to Feel Energetically Certified Without Taking Any Exams.”

The syllabus, naturally, was written by AI.